We recently had the pleasure of hosting a Juno Learning Online CPD session, Demystifying AI with Dr Andrew Chen, exploring how AI actually works, where it can go wrong, and what responsible use looks like for in-house legal teams.

Ngā mihi nui to Dr Andrew Chen for sharing his practical, hype-free expertise so generously, drawing on his deep experience in AI and machine learning.

Juno Lawyer Monique van Bellen shares her key takeaways with us, capturing insights from a session that focused on clarity, context, and confidence when engaging with AI.

Demystifying AI

Dr Andrew Chen’s session offered great insight into AI: what it is, how the different types of models work, and tips and tricks for getting the most out of it, along with key cautions about its limitations and how to use it responsibly. As well as demystifying AI's potential, the session highlighted the pitfalls to avoid and AI's inherent constraints, so that attendees can use it with more confidence.

What is AI?

AI seeks to emulate human processes: it perceives information, recognises patterns and communicates. AI outputs reflect patterns in data and inferences drawn from those patterns; they are not necessarily objective reality.

Types of AI models

Rule-based models are the most reliable, predictable and traditional of the models. With these models, you say if X, then Y, even if X is difficult to assess. The difficulty with these models is that significant human intervention is required to describe the rules. If you already have human-defined rules, then rule-based models are useful to automate the process.

Probabilistic models learn from data and handle ambiguities. Almost all modern AI systems in use today are probabilistic, meaning they provide the most likely answer based on training data, not necessarily the only or perfectly accurate answer.
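
To make the contrast concrete, here is a minimal Python sketch. It is an illustration only and was not part of Andrew's session: the function names, the contract-value threshold and the tiny training set are all hypothetical. The first function applies a rule a human wrote; the second part "learns" from labelled examples and returns the most likely label with an estimated probability.

```python
from collections import Counter


def rule_based_review(contract_value: float, threshold: float = 100_000) -> str:
    """Rule-based: a human wrote the rule 'if value exceeds threshold, escalate'.

    The threshold is a made-up figure for illustration.
    """
    return "escalate" if contract_value > threshold else "standard"


def train_probabilistic(examples):
    """Count how often each label appeared with each keyword in the training data."""
    counts = {}
    for keyword, label in examples:
        counts.setdefault(keyword, Counter())[label] += 1
    return counts


def predict(counts, keyword):
    """Return the most frequent label for a keyword and its share of the examples."""
    label_counts = counts[keyword]
    label, n = label_counts.most_common(1)[0]
    return label, n / sum(label_counts.values())


# Hypothetical training data: (clause keyword, risk label assigned by a reviewer)
examples = [
    ("indemnity", "high risk"),
    ("indemnity", "high risk"),
    ("indemnity", "low risk"),
    ("notices", "low risk"),
]
model = train_probabilistic(examples)

print(rule_based_review(250_000))   # same input always gives the same answer
print(predict(model, "indemnity"))  # most likely label, with a probability
```

The rule-based function always escalates a 250,000 contract because the rule says so. The probabilistic model labels "indemnity" as high risk with a probability of about 0.67, because two of its three training examples said so: a best guess drawn from the data, not a certainty.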

Generative AI aims to emulate human tasks of perception, cognition, and communication. Unlike older "discriminative" or "classification" AI models that might tell you if a picture is a cat or a dog, generative AI can "make you a picture of a cat." It creates something new based on its training.

The technology behind major generative AI systems like ChatGPT, Copilot, or Gemini is called Large Language Models (LLMs). These are trained on vast amounts of human writing, and because generative AI is trained on human-created data, it reflects human assumptions and biases. They are often brilliant with nuance but can also be confidently wrong, offering a best guess without indicating that it is a guess. This is an important limitation to be mindful of when using generative AI.

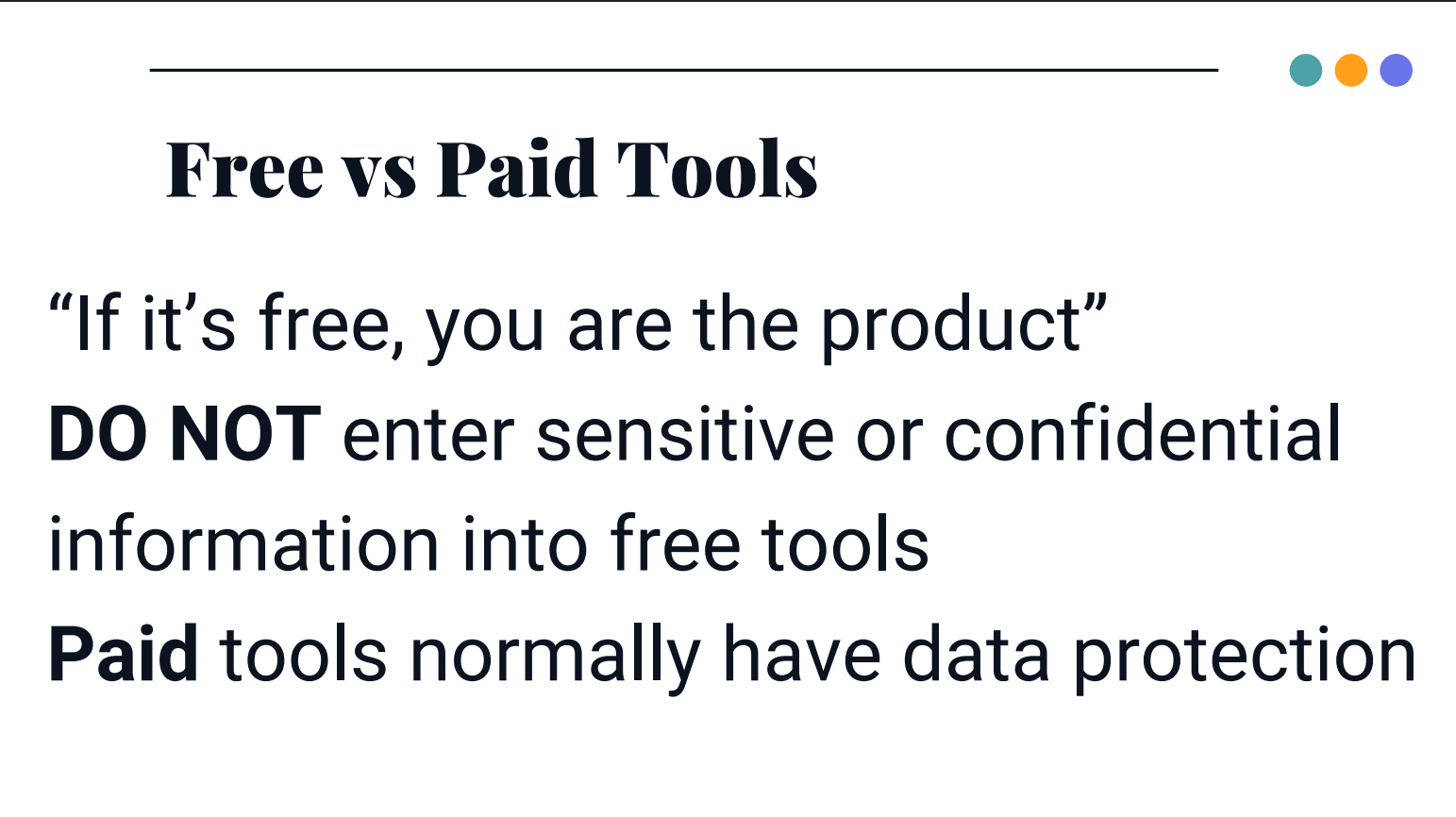

Free vs paid AI tools

Andrew highlighted important differences between the use of free and paid AI tools. With free AI tools, any data entered may be used for training and can be made public, so they are not suitable for confidential or personal information.

By comparison, paid AI tools can offer contractual protection that the data you enter isn’t used for training. That is safer, but Andrew still recommends avoiding highly sensitive material.

Limitations of AI

Andrew raised some important limitations on the use of AI, including:

- Probabilistic nature and inaccuracy: AI systems are not 100% accurate due to their probabilistic design. The session cited a figure of roughly 30% of generative AI responses containing errors.

- Hallucinations: AI can "hallucinate" or confidently present incorrect information as fact.

- Bias in training data: AI models reflect the assumptions and biases present in their training data.

- Data privacy and confidentiality: Entering sensitive or confidential information into free AI tools is risky, as the data may be stored and reused for purposes beyond your control.

- Lack of legal privilege and discoverability: AI-generated material is generally not legally privileged and can be discoverable under laws like the Official Information Act.

Tips for using AI successfully

Andrew provided several useful tips to assist with getting the most out of AI, such as:

- Understand the nature of AI: most modern AI systems are probabilistic, meaning they provide the most likely answer based on training data, not necessarily the only or perfectly accurate answer.

- Be specific and provide context in prompts: treat AI like a junior lawyer, provide ample context, use full sentences not bullet points, define the desired output format, specify the target audience, and constrain the data sources.

- Leverage memory: AI models have "memory" that allows them to recall previous interactions within a conversation. Use this to refine responses and build on earlier prompts.

- Consider using meta-tools (such as Perplexity): These tools are useful for research, as they work across multiple AI models and help select the most appropriate one.

- Experiment and practice: The best way to learn is by doing, actively using AI tools to understand what works and what doesn't.

- Treat your data with care, especially when using free tools. As Andrew put it, don’t leave your information on the bus.
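
The prompting tip above (context, output format, audience and constrained sources) can be sketched as a small Python helper. This is a hypothetical illustration, not a tool from the session: `build_prompt` and its field names are assumptions, and the output is plain text you could paste into whichever AI tool you use.

```python
def build_prompt(task: str, context: str, output_format: str,
                 audience: str, sources: str) -> str:
    """Assemble a prompt that spells out each element in full sentences.

    All parameter names are hypothetical; they simply mirror the tip's checklist.
    """
    parts = [
        f"Task: {task}",
        f"Context: {context}",
        f"Output format: {output_format}",
        f"Audience: {audience}",
        f"Sources: Only rely on {sources}.",
    ]
    return "\n".join(parts)


# Example with made-up, non-confidential details
prompt = build_prompt(
    task="Summarise the key obligations in the supplier agreement template.",
    context="I am an in-house lawyer preparing a briefing for the procurement team.",
    output_format="A one-page summary in plain English with short headings.",
    audience="Non-lawyers who negotiate with suppliers.",
    sources="the template text I paste below",
)
print(prompt)
```

Writing the elements out this way works as a checklist: if a field is hard to fill in, the prompt probably lacks the context a junior lawyer would also need.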

Ethics and sustainability

Andrew also addressed concerns about the use of AI from both ethical and sustainability perspectives. Ethical standards around the use of AI are still developing, so it’s difficult to assess how moral or ethical an AI model is. From a sustainability perspective, AI can be energy-intensive, although Andrew noted that these impacts are often discussed without context and should be considered alongside other large-scale digital technologies.

A balanced view and a reassuring takeaway

Andrew’s parting reassurance was that feeling unsure, or worried about being left behind by AI, is completely normal. Learning AI is a skill, and the only real way to build it is by using the tools and seeing what works. Much of the hype we hear in the news about AI’s “magic” comes from cherry-picked examples or tools that aren’t widely available yet.

If you’re getting useful outcomes, you’re doing just fine. Once the mystery fades, AI becomes just another tool, and far less daunting, with a bit of understanding and care.

Ngā mihi nui to Andrew and to everyone who joined the session for your thoughtful engagement. It’s always a privilege to learn alongside our in-house legal community.

In the spirit of being open and generous, here are some ways to keep learning and stay connected:

Watch the recording of Demystifying AI with Andrew Chen available on our website in News and Resources

Subscribe to Juno Communications to stay connected with future Juno Learning CPD sessions and insights for in-house lawyers